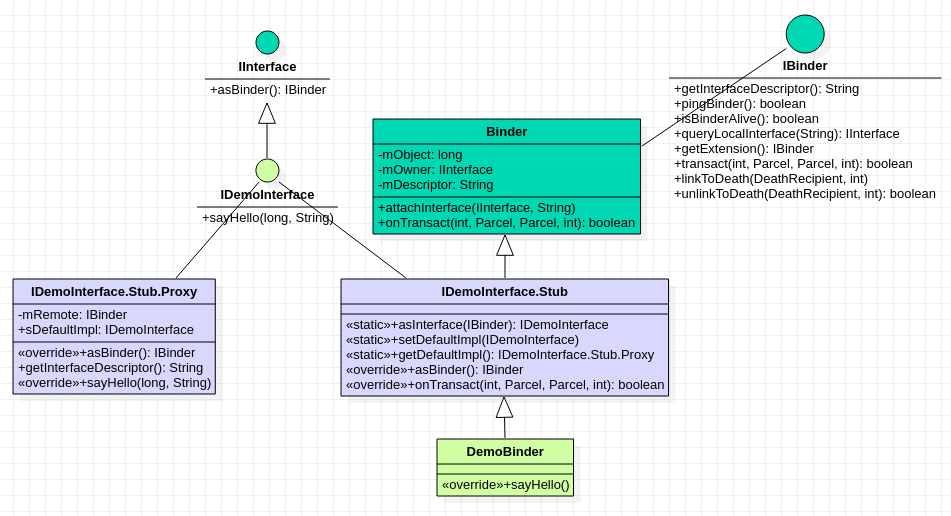

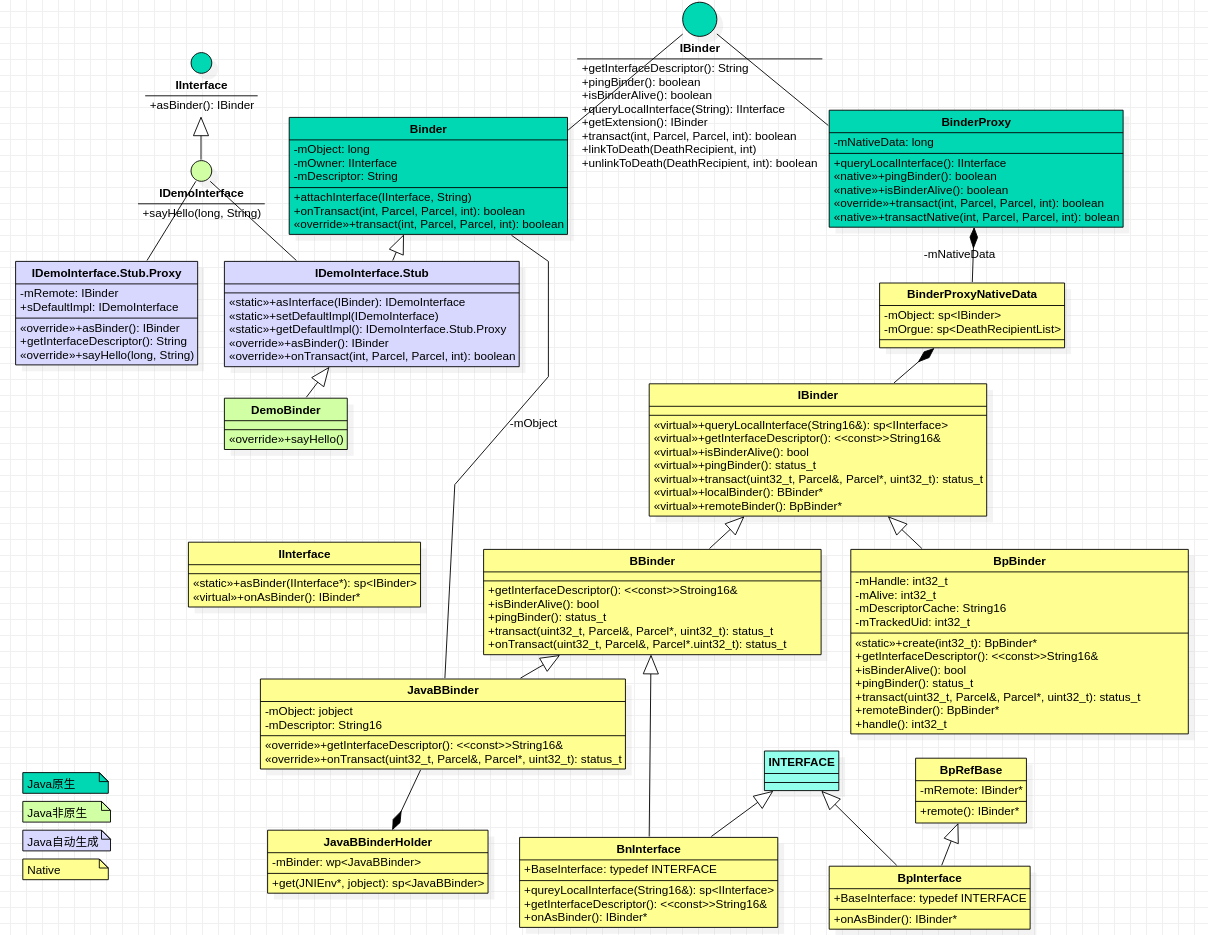

Introduction

Continuing from the previous article, let's first review the class diagram of the IBinder-related interfaces:

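The Client process now holds the IBinder object for the Server's IDemoInterface. But which object is it exactly: the Stub itself, the Proxy, or the Proxy's mRemote?

As before, let's start by following the call to sayHello: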

```kotlin
override fun onServiceConnected(p0: ComponentName?, p1: IBinder?) {
    Log.d("Client", "DemoService connected")
    val mProxyBinder = IDemoInterface.Stub.asInterface(p1)
    try {
        mProxyBinder.sayHello(5000, "Hello?")
    } catch (e: RemoteException) {
        // Ignored in this demo.
    }
}
```

1. The asInterface method

1.1 IDemoInterface.Stub.asInterface

```java
public static com.oneplus.opbench.server.IDemoInterface asInterface(android.os.IBinder obj) {
    if ((obj == null)) {
        return null;
    }
    android.os.IInterface iin = obj.queryLocalInterface(DESCRIPTOR);
    if (((iin != null) && (iin instanceof com.oneplus.opbench.server.IDemoInterface))) {
        return ((com.oneplus.opbench.server.IDemoInterface) iin);
    }
    return new com.oneplus.opbench.server.IDemoInterface.Stub.Proxy(obj);
}
```

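This method is generated by the AIDL compiler. It turns an IBinder into an IDemoInterface: if queryLocalInterface finds a local implementation, that object is returned directly; otherwise the IBinder is wrapped in a new Stub.Proxy.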
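To make the decision in asInterface concrete, here is a minimal C++ sketch of the same pattern. The classes are hypothetical, heavily simplified stand-ins, not the real Android classes: a local Binder answers queryLocalInterface() with its owner when the descriptor matches, a BinderProxy always answers nullptr, and anything that is not local gets wrapped in a proxy object.

```cpp
#include <cassert>
#include <memory>
#include <string>

// Hypothetical, simplified stand-ins; not the real Android classes.
struct IInterface {
    virtual ~IInterface() = default;
};

struct IBinder {
    virtual ~IBinder() = default;
    // Local interface if this binder lives in our process and the
    // descriptor matches, nullptr otherwise.
    virtual IInterface* queryLocalInterface(const std::string& descriptor) = 0;
};

// Local object: remembers owner and descriptor, like Binder.attachInterface().
struct Binder : IBinder {
    IInterface* mOwner = nullptr;
    std::string mDescriptor;
    void attachInterface(IInterface* owner, const std::string& descriptor) {
        mOwner = owner;
        mDescriptor = descriptor;
    }
    IInterface* queryLocalInterface(const std::string& descriptor) override {
        return (!mDescriptor.empty() && mDescriptor == descriptor) ? mOwner : nullptr;
    }
};

// Reference to an object in another process: never has a local interface.
struct BinderProxy : IBinder {
    IInterface* queryLocalInterface(const std::string&) override { return nullptr; }
};

const std::string DESCRIPTOR = "com.example.IDemoInterface";  // hypothetical

struct IDemoInterface : IInterface {};

// Server side: the Stub is both a Binder and the interface.
struct DemoStub : Binder, IDemoInterface {
    DemoStub() { attachInterface(this, DESCRIPTOR); }
};

// Client side: the Proxy just holds the IBinder it was given (mRemote).
struct DemoProxy : IDemoInterface {
    explicit DemoProxy(IBinder* remote) : mRemote(remote) {}
    IBinder* mRemote;
};

// Same decision as the generated asInterface(). A newly created proxy is
// stored in `proxyOut`, which owns it.
IDemoInterface* asInterface(IBinder* obj, std::unique_ptr<DemoProxy>& proxyOut) {
    if (obj == nullptr) return nullptr;
    IInterface* iin = obj->queryLocalInterface(DESCRIPTOR);
    if (auto* local = dynamic_cast<IDemoInterface*>(iin)) {
        return local;  // same process: use the Stub directly
    }
    proxyOut = std::make_unique<DemoProxy>(obj);
    return proxyOut.get();
}
```

In the same process the caller gets the Stub back and calls it directly; only a remote binder costs an extra Proxy object and, later, a real transaction.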

1.2 Binder.queryLocalInterface

```java
public @Nullable IInterface queryLocalInterface(@NonNull String descriptor) {
    if (mDescriptor != null && mDescriptor.equals(descriptor)) {
        return mOwner;
    }
    return null;
}

public void attachInterface(@Nullable IInterface owner, @Nullable String descriptor) {
    mOwner = owner;
    mDescriptor = descriptor;
}
```

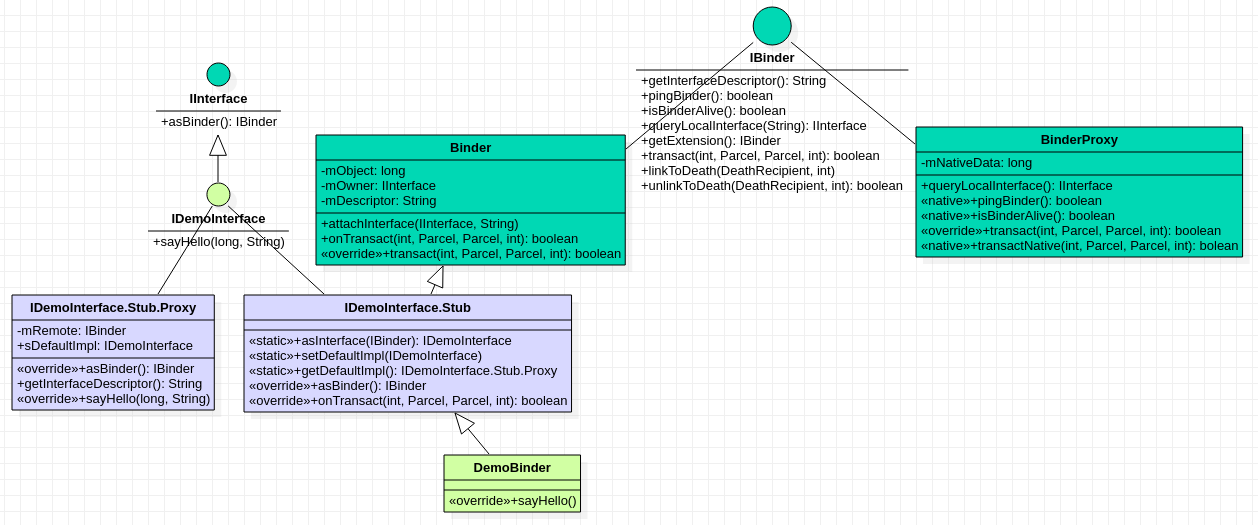

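Judging from the class diagram, Binder seems to be the only class implementing IBinder, and IDemoInterface.Stub the only class extending Binder. So is the IBinder handed back by SystemServer actually the server's IDemoInterface.Stub? But remember that we are in the Client process, where mOwner would still be null. Debugging the client process confirms this: queryLocalInterface returns null, and the IBinder passed in is in fact a BinderProxy! When would this method return non-null, and when did the IBinder become a BinderProxy? Let's leave that question open for now.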

1.3 Creating the IDemoInterface.Stub.Proxy object

```java
private android.os.IBinder mRemote;

Proxy(android.os.IBinder remote) {
    mRemote = remote;
}
```

Now it is clear: the Proxy's mRemote actually refers to that BinderProxy.

2. sayHello

2.1 Proxy.sayHello

```java
@Override
public void sayHello(long aLong, java.lang.String aString) throws android.os.RemoteException {
    android.os.Parcel _data = android.os.Parcel.obtain();
    android.os.Parcel _reply = android.os.Parcel.obtain();
    try {
        _data.writeInterfaceToken(DESCRIPTOR);
        _data.writeLong(aLong);
        _data.writeString(aString);
        boolean _status = mRemote.transact(Stub.TRANSACTION_sayHello, _data, _reply, 0);
        if (!_status && getDefaultImpl() != null) {
            getDefaultImpl().sayHello(aLong, aString);
            return;
        }
        _reply.readException();
    } finally {
        _reply.recycle();
        _data.recycle();
    }
}
```
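The essential contract of Proxy.sayHello is that the client writes the interface token and the arguments in a fixed order, and the Stub on the other side reads them back in exactly that order. The sketch below models this with a hypothetical ToyParcel byte buffer; it is not android::Parcel, and writeInterfaceToken is reduced to writing the descriptor string (the real one writes additional header data). TRANSACTION_sayHello = FIRST_CALL_TRANSACTION + 0 follows the usual AIDL numbering, assuming sayHello is the first method of the interface.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical toy Parcel: a byte buffer plus a read cursor. It only models
// the "read back in the order you wrote" contract, not android::Parcel.
class ToyParcel {
public:
    void writeInt64(int64_t v) { append(&v, sizeof v); }
    void writeString(const std::string& s) {
        writeInt64(static_cast<int64_t>(s.size()));  // length prefix
        append(s.data(), s.size());
    }
    int64_t readInt64() {
        int64_t v = 0;
        take(&v, sizeof v);
        return v;
    }
    std::string readString() {
        const auto n = static_cast<size_t>(readInt64());
        std::string s(n, '\0');
        take(&s[0], n);
        return s;
    }

private:
    void append(const void* p, size_t n) {
        const auto* bytes = static_cast<const uint8_t*>(p);
        buf_.insert(buf_.end(), bytes, bytes + n);
    }
    void take(void* p, size_t n) {
        if (n == 0) return;
        if (pos_ + n > buf_.size()) throw std::out_of_range("read past end of parcel");
        std::memcpy(p, buf_.data() + pos_, n);
        pos_ += n;
    }
    std::vector<uint8_t> buf_;
    size_t pos_ = 0;
};

constexpr uint32_t FIRST_CALL_TRANSACTION = 1;
constexpr uint32_t TRANSACTION_sayHello = FIRST_CALL_TRANSACTION + 0;
const std::string DESCRIPTOR = "com.example.IDemoInterface";  // hypothetical

// Client side: roughly what Proxy.sayHello puts into _data.
ToyParcel marshalSayHello(int64_t aLong, const std::string& aString) {
    ToyParcel data;
    data.writeString(DESCRIPTOR);  // stands in for writeInterfaceToken()
    data.writeInt64(aLong);
    data.writeString(aString);
    return data;
}

struct SayHelloArgs {
    int64_t aLong;
    std::string aString;
};

// Server side: roughly what Stub.onTransact does for TRANSACTION_sayHello.
SayHelloArgs unmarshalSayHello(uint32_t code, ToyParcel& data) {
    if (code != TRANSACTION_sayHello) throw std::invalid_argument("unknown transaction");
    if (data.readString() != DESCRIPTOR) throw std::runtime_error("interface mismatch");
    SayHelloArgs args;
    args.aLong = data.readInt64();  // same order as written
    args.aString = data.readString();
    return args;
}
```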

2.2 BinderProxy.transact

```java
public boolean transact(int code, Parcel data, Parcel reply, int flags) throws RemoteException {
    // ... (other logic in the real method omitted)
    return transactNative(code, data, reply, flags);
}
```

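2.3 android_util_Binder.cpp#android_os_BinderProxy_transact

```cpp
static jboolean android_os_BinderProxy_transact(JNIEnv* env, jobject obj,
        jint code, jobject dataObj, jobject replyObj, jint flags) // throws RemoteException
{
    Parcel* data = parcelForJavaObject(env, dataObj);
    Parcel* reply = parcelForJavaObject(env, replyObj);
    // ... (null checks omitted)

    // The native object behind the Java BinderProxy.
    IBinder* target = getBPNativeData(env, obj)->mObject.get();
    // ... (null check and logging omitted)

    status_t err = target->transact(code, *data, reply, flags);
    if (err == NO_ERROR) {
        return JNI_TRUE;
    }
    // ... (UNKNOWN_TRANSACTION handling and signalExceptionForError omitted)
    return JNI_FALSE;
}
```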

2.3.1 android_util_Binder.cpp#getBPNativeData

```cpp
struct BinderProxyNativeData {
    sp<IBinder> mObject;            // the native IBinder behind the Java BinderProxy
    sp<DeathRecipientList> mOrgue;  // death recipients registered on it
};

static struct binderproxy_offsets_t {
    // Class state.
    jclass mClass;
    jmethodID mGetInstance;
    jmethodID mSendDeathNotice;

    // Object state.
    jfieldID mNativeData;  // field holding a pointer to BinderProxyNativeData
} gBinderProxyOffsets;

BinderProxyNativeData* getBPNativeData(JNIEnv* env, jobject obj) {
    return (BinderProxyNativeData *) env->GetLongField(obj, gBinderProxyOffsets.mNativeData);
}
```

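It isn't obvious where this BinderProxy comes from. The native layer defines no such class, so `obj` must be the Java-layer BinderProxy, whose mNativeData field holds a pointer to a BinderProxyNativeData.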

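2.3.2 IBinder#transact

```cpp
virtual status_t transact(uint32_t code,
                          const Parcel& data,
                          Parcel* reply,
                          uint32_t flags = 0) = 0;
```

So the mObject returned by getBPNativeData is an IBinder, but IBinder::transact is a pure virtual function with no implementation of its own. How do we go further from here?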

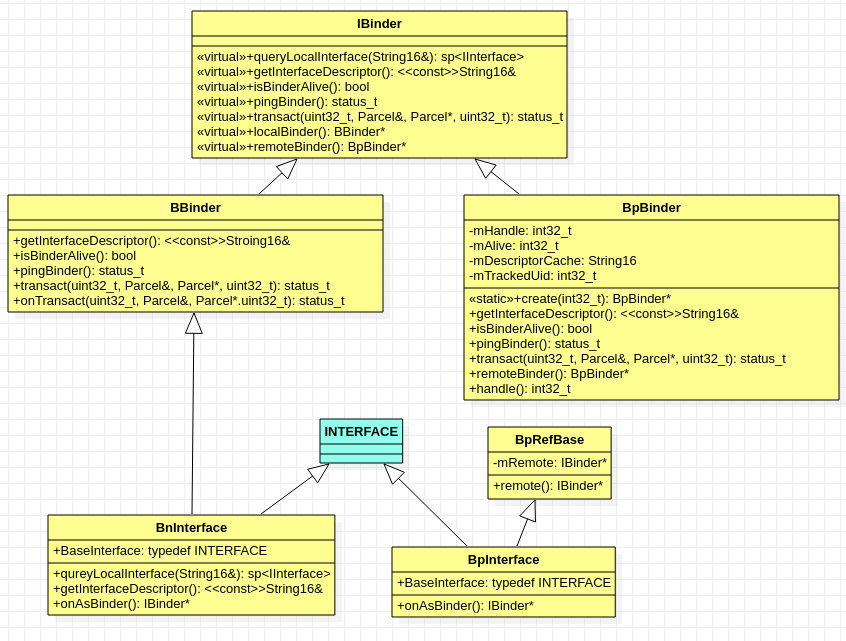

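Native code can be debugged too, but it is less convenient. Instead, let's first sort out the native class diagram around IBinder. Note that we are still in the Client process.

With the relationships between the IBinder classes roughly sorted out, the function name getBPNativeData suggests that the object is a BpBinder.

But how can we confirm that? Let's go back to how the Client App and the Server App establish communication, and trace where the BinderProxy comes from.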
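The C++ sketch below illustrates the two mechanisms this step relies on: the Java BinderProxy only stores a long, which getBPNativeData reinterprets as a pointer to a BinderProxyNativeData, and the call through the IBinder base is then dispatched at runtime to whatever concrete class the object really is. All types are hypothetical simplifications (std::shared_ptr instead of sp<>, a string-returning transact instead of the Parcel-based one).

```cpp
#include <cassert>
#include <cstdint>
#include <memory>
#include <string>

// Hypothetical simplification: transact() returns a description string
// instead of taking Parcels.
struct IBinder {
    virtual ~IBinder() = default;
    virtual std::string transact(uint32_t code) = 0;  // pure virtual, as in IBinder
};

struct BpBinder : IBinder {
    explicit BpBinder(int32_t handle) : mHandle(handle) {}
    std::string transact(uint32_t code) override {
        // The real BpBinder::transact hands mHandle to IPCThreadState.
        return "BpBinder handle=" + std::to_string(mHandle) + " code=" + std::to_string(code);
    }
    int32_t mHandle;
};

// Same shape as BinderProxyNativeData (mOrgue left out).
struct BinderProxyNativeData {
    std::shared_ptr<IBinder> mObject;
};

// What the Java BinderProxy holds: just a long field.
struct JavaBinderProxy {
    int64_t mNativeData = 0;
};

// Like getBPNativeData(): turn the stored long back into a native pointer.
BinderProxyNativeData* getBPNativeData(const JavaBinderProxy& obj) {
    return reinterpret_cast<BinderProxyNativeData*>(static_cast<intptr_t>(obj.mNativeData));
}
```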

3. How BinderProxy is created

3.1 publishServiceLocked

ServerApp: ActivityThread.handleBindService(BindServiceData data)

SystemServer: ActivityManagerService.publishService(IBinder token, Intent intent, IBinder service)

SystemServer: ActiveServices.publishServiceLocked(ServiceRecord r, Intent intent, IBinder service)

```java
private void handleBindService(BindServiceData data) {
    Service s = mServices.get(data.token);
    if (DEBUG_SERVICE)
        Slog.v(TAG, "handleBindService s=" + s + " rebind=" + data.rebind);
    if (s != null) {
        try {
            data.intent.setExtrasClassLoader(s.getClassLoader());
            data.intent.prepareToEnterProcess();
            try {
                if (!data.rebind) {
                    // onBind() returns our Stub, which is published to AMS here.
                    IBinder binder = s.onBind(data.intent);
                    ActivityManager.getService().publishService(
                            data.token, data.intent, binder);
                } else {
                    s.onRebind(data.intent);
                    ActivityManager.getService().serviceDoneExecuting(
                            data.token, SERVICE_DONE_EXECUTING_ANON, 0, 0);
                }
            } catch (RemoteException ex) {
                throw ex.rethrowFromSystemServer();
            }
        } catch (Exception e) {
            // ... (exception handling omitted)
        }
    }
}
```

3.1.1 Initializing the server-side Stub

```kotlin
class DemoBinder : IDemoInterface.Stub() {
    override fun sayHello(aLong: Long, aString: String?) {
        Log.d("DemoService", "$aString:$aLong")
    }
}

private val binder = DemoBinder()

override fun onBind(intent: Intent?): IBinder {
    return binder
}
```

Note also that constructing DemoBinder invokes the parent class constructor:

```java
public Stub() {
    this.attachInterface(this, DESCRIPTOR);
}

// In Binder: remember the owner and descriptor for queryLocalInterface().
public void attachInterface(@Nullable IInterface owner, @Nullable String descriptor) {
    mOwner = owner;
    mDescriptor = descriptor;
}
```

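But we still haven't found where the BinderProxy is created. What we do know is that Binder communication works by writing data into a Parcel,

and the last parameter of AMS.publishService is an IBinder.

3.2 publishService writes its arguments

The body of this method can be seen in the IActivityManager.Stub.Proxy.class file generated when IActivityManager.aidl is compiled (think about why it is the Proxy and not the Stub):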

```java
public void publishService(IBinder token, Intent intent, IBinder service) throws RemoteException {
    Parcel _data = Parcel.obtain();
    Parcel _reply = Parcel.obtain();
    try {
        _data.writeInterfaceToken("android.app.IActivityManager");
        _data.writeStrongBinder(token);
        if (intent != null) {
            _data.writeInt(1);
            intent.writeToParcel(_data, 0);
        } else {
            _data.writeInt(0);
        }
        // The service's IBinder (our Stub) is written here.
        _data.writeStrongBinder(service);
        boolean _status = this.mRemote.transact(32, _data, _reply, 0);
        if (!_status && IActivityManager.Stub.getDefaultImpl() != null) {
            IActivityManager.Stub.getDefaultImpl().publishService(token, intent, service);
            return;
        }
        _reply.readException();
    } finally {
        _reply.recycle();
        _data.recycle();
    }
}
```

3.2.1 Parcel.writeStrongBinder

```java
public final void writeStrongBinder(IBinder val) {
    nativeWriteStrongBinder(mNativePtr, val);
}
```

It goes straight down to the native layer to do the work.

3.2.2 android_os_Parcel#android_os_Parcel_writeStrongBinder

```cpp
static void android_os_Parcel_writeStrongBinder(JNIEnv* env, jclass clazz, jlong nativePtr, jobject object)
{
    Parcel* parcel = reinterpret_cast<Parcel*>(nativePtr);
    if (parcel != NULL) {
        // Convert the Java IBinder into a native IBinder, then flatten it into the Parcel.
        const status_t err = parcel->writeStrongBinder(ibinderForJavaObject(env, object));
        if (err != NO_ERROR) {
            signalExceptionForError(env, clazz, err);
        }
    }
}
```

3.2.2.1 android_util_Binder#ibinderForJavaObject

```cpp
sp<IBinder> ibinderForJavaObject(JNIEnv* env, jobject obj)
{
    if (obj == NULL) return NULL;

    // Instance of Binder?
    if (env->IsInstanceOf(obj, gBinderOffsets.mClass)) {
        JavaBBinderHolder* jbh = (JavaBBinderHolder*)
            env->GetLongField(obj, gBinderOffsets.mObject);
        return jbh->get(env, obj);
    }

    // Instance of BinderProxy?
    if (env->IsInstanceOf(obj, gBinderProxyOffsets.mClass)) {
        return getBPNativeData(env, obj)->mObject;
    }

    ALOGW("ibinderForJavaObject: %p is not a Binder object", obj);
    return NULL;
}
```

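This is where BinderProxy shows up for the first time. However, we are still in the Server App process, and the IBinder passed down from above is clearly just an IDemoInterface.Stub, i.e. a Binder.

So the first branch is taken: the JavaBBinderHolder stored in the Binder's mObject field is fetched, and its get() returns a JavaBBinder. That gives us good reason to suspect that the conversion to BinderProxy happens in the SystemServer process, where AMS lives.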

3.2.2.2 android_util_Binder#JavaBBinderHolder#get

```cpp
sp<JavaBBinder> get(JNIEnv* env, jobject obj)
{
    AutoMutex _l(mLock);
    sp<JavaBBinder> b = mBinder.promote();
    if (b == NULL) {
        b = new JavaBBinder(env, obj);
        if (mVintf) {
            ::android::internal::Stability::markVintf(b.get());
        }
        if (mExtension != nullptr) {
            b.get()->setExtension(mExtension);
        }
        mBinder = b;
        ALOGV("Creating JavaBinder %p (refs %p) for Object %p, weakCount=%" PRId32 "\n",
             b.get(), b->getWeakRefs(), obj, b->getWeakRefs()->getWeakCount());
    }

    return b;
}
```

3.2.2.3 Creating the native IBinder object: JavaBBinder

```cpp
JavaBBinder(JNIEnv* env, jobject /* Java Binder */ object)
    : mVM(jnienv_to_javavm(env)), mObject(env->NewGlobalRef(object))
{
    ALOGV("Creating JavaBBinder %p\n", this);
    gNumLocalRefsCreated.fetch_add(1, std::memory_order_relaxed);
    gcIfManyNewRefs(env);
}
```
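JavaBBinderHolder::get() keeps only a weak reference to its JavaBBinder and promotes it on each call, creating a new one only when the previous one is gone. A minimal sketch of that lazy, weakly cached creation, assuming std::weak_ptr/std::shared_ptr as stand-ins for Android's wp<>/sp<> (the real reference counting differs in detail) and a plain int in place of the Java object:

```cpp
#include <cassert>
#include <memory>
#include <mutex>

// Hypothetical stand-in: the real JavaBBinder holds a global ref to the Java Binder.
struct JavaBBinder {
    explicit JavaBBinder(int javaObject) : mObject(javaObject) {}
    int mObject;
};

class JavaBBinderHolder {
public:
    std::shared_ptr<JavaBBinder> get(int javaObject) {
        std::lock_guard<std::mutex> lock(mLock);
        std::shared_ptr<JavaBBinder> b = mBinder.lock();  // like mBinder.promote()
        if (!b) {
            // None alive: create one and remember it only weakly.
            b = std::make_shared<JavaBBinder>(javaObject);
            mBinder = b;
            ++mCreated;
        }
        return b;
    }
    int created() const { return mCreated; }

private:
    std::mutex mLock;
    std::weak_ptr<JavaBBinder> mBinder;
    int mCreated = 0;
};
```

Holding the native object weakly means the Java Binder does not keep its JavaBBinder alive on its own; it lives only as long as something (for example a pending reference from the driver side) holds it strongly.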

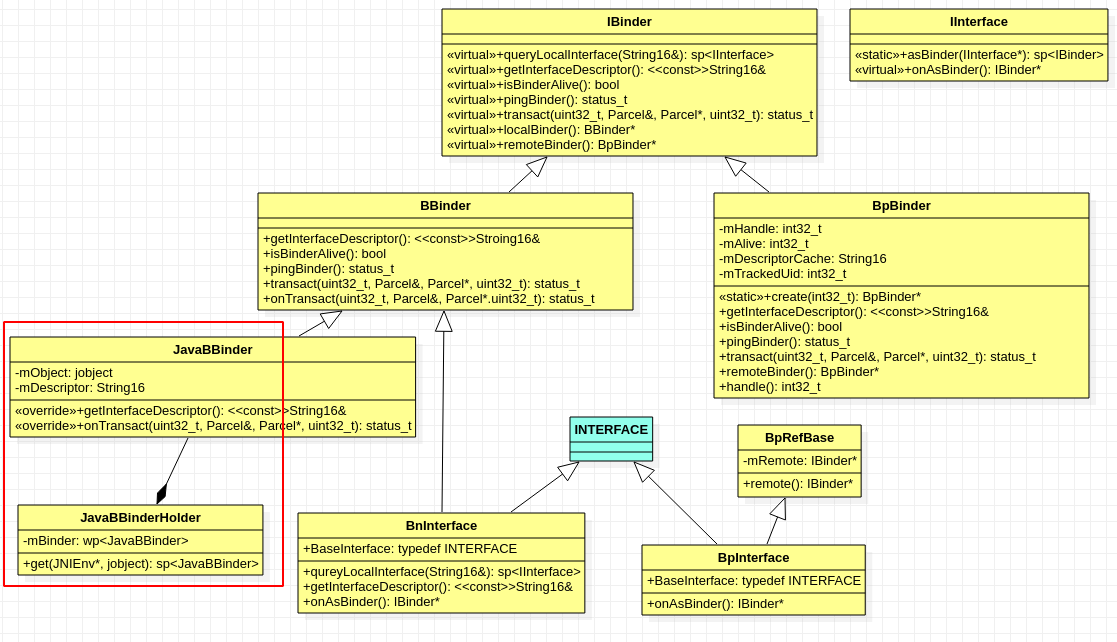

Let's look again at the native classes related to IBinder:

As expected, JavaBBinder inherits from IBinder (through BBinder). Back in 3.2.2, the next step is writeStrongBinder.

3.2.3 Parcel#writeStrongBinder

```cpp
status_t Parcel::writeStrongBinder(const sp<IBinder>& val)
{
    return flattenBinder(val);
}

status_t Parcel::flattenBinder(const sp<IBinder>& binder)
{
    flat_binder_object obj;
    obj.flags = FLAT_BINDER_FLAG_ACCEPTS_FDS;  // simplified: scheduling-policy bits omitted

    if (binder != nullptr) {
        BBinder *local = binder->localBinder();
        if (!local) {
            // Remote binder (BpBinder): send its handle.
            BpBinder *proxy = binder->remoteBinder();
            if (proxy == nullptr) {
                ALOGE("null proxy");
            }
            const int32_t handle = proxy ? proxy->handle() : 0;
            obj.hdr.type = BINDER_TYPE_HANDLE;
            obj.binder = 0; /* Don't pass uninitialized stack data to a remote process */
            obj.handle = handle;
            obj.cookie = 0;
        } else {
            // Local binder (BBinder): send its weak-ref pointer and its own address.
            if (local->isRequestingSid()) {
                obj.flags |= FLAT_BINDER_FLAG_TXN_SECURITY_CTX;
            }
            obj.hdr.type = BINDER_TYPE_BINDER;
            obj.binder = reinterpret_cast<uintptr_t>(local->getWeakRefs());
            obj.cookie = reinterpret_cast<uintptr_t>(local);
        }
    } else {
        obj.hdr.type = BINDER_TYPE_BINDER;
        obj.binder = 0;
        obj.cookie = 0;
    }

    return finishFlattenBinder(binder, obj);
}
```

Local and remote binders are handled differently here; for now we focus on just one of the branches.

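3.2.3.1 IBinder#localBinder

The IBinder here is the JavaBBinder object whose construction we saw above.

```cpp
// Default in IBinder: not a local binder.
BBinder* IBinder::localBinder()
{
    return nullptr;
}

// BBinder overrides it and returns itself.
BBinder* BBinder::localBinder()
{
    return this;
}
```

The IBinder pointer actually points to a JavaBBinder, which derives from BBinder, which in turn derives from IBinder. BBinder overrides the virtual function localBinder() and JavaBBinder does not override it again, so the call resolves to BBinder::localBinder(), which returns this.

So this binder is local!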
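The branch taken in flattenBinder therefore depends only on which localBinder() the virtual call resolves to. The sketch below reproduces that structure with hypothetical minimal classes: JavaBBinder inherits BBinder::localBinder() and is flattened as BINDER_TYPE_BINDER with its own address in cookie, while a BpBinder falls back to IBinder::localBinder(), gets nullptr, and is flattened as BINDER_TYPE_HANDLE. The enum values, the separate binder/handle fields, and the use of the object address instead of getWeakRefs() are simplifications.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical values; the real constants are built with B_PACK_CHARS.
enum BinderType : uint32_t { BINDER_TYPE_BINDER = 1, BINDER_TYPE_HANDLE = 2 };

struct BBinder;
struct BpBinder;

struct IBinder {
    virtual ~IBinder() = default;
    virtual BBinder* localBinder() { return nullptr; }    // default: not local
    virtual BpBinder* remoteBinder() { return nullptr; }  // default: not remote
};

struct BBinder : IBinder {
    BBinder* localBinder() override { return this; }
};

// Overrides nothing here, so localBinder() resolves to BBinder's version.
struct JavaBBinder : BBinder {};

struct BpBinder : IBinder {
    explicit BpBinder(int32_t handle) : mHandle(handle) {}
    BpBinder* remoteBinder() override { return this; }
    int32_t handle() const { return mHandle; }
    int32_t mHandle;
};

// Simplified flat_binder_object: no header struct, flags or union.
struct FlatBinderObject {
    BinderType type = BINDER_TYPE_BINDER;
    uintptr_t binder = 0;
    int32_t handle = 0;
    uintptr_t cookie = 0;
};

// Branch structure of Parcel::flattenBinder.
FlatBinderObject flattenBinder(IBinder* binder) {
    FlatBinderObject obj;  // null binder: BINDER_TYPE_BINDER with zeros
    if (binder == nullptr) return obj;
    if (BBinder* local = binder->localBinder()) {
        obj.type = BINDER_TYPE_BINDER;
        obj.binder = reinterpret_cast<uintptr_t>(local);  // real code: local->getWeakRefs()
        obj.cookie = reinterpret_cast<uintptr_t>(local);
    } else {
        BpBinder* proxy = binder->remoteBinder();
        obj.type = BINDER_TYPE_HANDLE;
        obj.handle = proxy ? proxy->handle() : 0;
    }
    return obj;
}
```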

3.2.4 Parcel#finishFlattenBinder

"Flatten" means to pack: the JavaBBinder is packed into a flat structure that can then be sent out.

```cpp
status_t Parcel::finishFlattenBinder(
    const sp<IBinder>& binder, const flat_binder_object& flat)
{
    status_t status = writeObject(flat, false);
    if (status != OK) return status;

    internal::Stability::tryMarkCompilationUnit(binder.get());
    return writeInt32(internal::Stability::get(binder.get()));
}
```

At this point we know how a Java-layer IBinder object gets stored into the native Parcel.

3.3 SystemServer receives the arguments

We won't dig into the transport itself here, since it is just another Binder transaction. Instead, let's look at what AMS receives when the Server App publishes its Service's IBinder.

Again we look at the publishService case in the compiled IActivityManager.Stub class. Note that we have now switched to the SystemServer process (ignoring how the switch happens).

```java
public boolean onTransact(int code, Parcel data, Parcel reply, int flags) throws RemoteException {
    // Decompiled excerpt: only the publishService case is shown;
    // descriptor is "android.app.IActivityManager".
    data.enforceInterface(descriptor);
    IBinder iBinder11 = data.readStrongBinder();
    Intent intent6;
    if (0 != data.readInt()) {
        intent6 = (Intent) Intent.CREATOR.createFromParcel(data);
    } else {
        intent6 = null;
    }
    IBinder iBinder26 = data.readStrongBinder();
    publishService(iBinder11, intent6, iBinder26);
    reply.writeNoException();
    return true;
}
```

3.3.1 Parcel.readStrongBinder

```java
public final IBinder readStrongBinder() {
    return nativeReadStrongBinder(mNativePtr);
}
```

Again, it goes straight to native code.

3.3.2 android_os_Parcel#android_os_Parcel_readStrongBinder

```cpp
static jobject android_os_Parcel_readStrongBinder(JNIEnv* env, jclass clazz, jlong nativePtr)
{
    Parcel* parcel = reinterpret_cast<Parcel*>(nativePtr);
    if (parcel != NULL) {
        // Unflatten a native IBinder, then map it to a Java object.
        return javaObjectForIBinder(env, parcel->readStrongBinder());
    }
    return NULL;
}
```

3.3.2.1 Parcel#readStrongBinder

```cpp
sp<IBinder> Parcel::readStrongBinder() const
{
    sp<IBinder> val;
    readNullableStrongBinder(&val);
    return val;
}

status_t Parcel::readStrongBinder(sp<IBinder>* val) const
{
    status_t status = readNullableStrongBinder(val);
    if (status == OK && !val->get()) {
        status = UNEXPECTED_NULL;
    }
    return status;
}

status_t Parcel::readNullableStrongBinder(sp<IBinder>* val) const
{
    return unflattenBinder(val);
}
```

3.3.2.2 Parcel#unflattenBinder

```cpp
status_t Parcel::unflattenBinder(sp<IBinder>* out) const
{
    const flat_binder_object* flat = readObject(false);

    if (flat) {
        switch (flat->hdr.type) {
            case BINDER_TYPE_BINDER: {
                sp<IBinder> binder = reinterpret_cast<IBinder*>(flat->cookie);
                return finishUnflattenBinder(binder, out);
            }
            case BINDER_TYPE_HANDLE: {
                sp<IBinder> binder =
                    ProcessState::self()->getStrongProxyForHandle(flat->handle);
                return finishUnflattenBinder(binder, out);
            }
        }
    }
    return BAD_TYPE;
}
```

Keep an eye on this spot: is flat->hdr.type here really still the original BINDER_TYPE_BINDER?

3.3.2.3 Parcel#finishUnflattenBinder

```cpp
status_t Parcel::finishUnflattenBinder(
    const sp<IBinder>& binder, sp<IBinder>* out) const
{
    int32_t stability;
    status_t status = readInt32(&stability);
    if (status != OK) return status;

    status = internal::Stability::set(binder.get(), stability, true /*log*/);
    if (status != OK) return status;

    *out = binder;
    return OK;
}
```

So what type is this IBinder really? Let's first go back to 3.3.2 and continue with readStrongBinder.

3.3.3 android_util_Binder#javaObjectForIBinder

```cpp
jobject javaObjectForIBinder(JNIEnv* env, const sp<IBinder>& val)
{
    if (val == NULL) return NULL;

    if (val->checkSubclass(&gBinderOffsets)) {
        // It's a JavaBBinder created by ibinderForJavaObject. Already has Java object.
        jobject object = static_cast<JavaBBinder*>(val.get())->object();
        LOGDEATH("objectForBinder %p: it's our own %p!\n", val.get(), object);
        return object;
    }

    BinderProxyNativeData* nativeData = new BinderProxyNativeData();
    nativeData->mOrgue = new DeathRecipientList;
    nativeData->mObject = val;

    jobject object = env->CallStaticObjectMethod(gBinderProxyOffsets.mClass,
            gBinderProxyOffsets.mGetInstance, (jlong) nativeData, (jlong) val.get());
    // ... (exception check and cleanup of a duplicate nativeData omitted)
    return object;
}
```

3.3.3.1 IBinder#checkSubclass

```cpp
// IBinder's default implementation.
bool IBinder::checkSubclass(const void* /*subclassID*/) const
{
    return false;
}

// JavaBBinder's override (android_util_Binder.cpp).
bool checkSubclass(const void* subclassID) const
{
    return subclassID == &gBinderOffsets;
}
```

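Here is a question worth thinking about. We are now in the SystemServer process, and it is tempting to assume that this IBinder points to a block of memory copied over from the Server App that mirrors the JavaBBinder.

If we then treat it as an IBinder and call one of its virtual functions, does the call go to IBinder's implementation or to JavaBBinder's?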

3.3.3.2 android_util_Binder#int_register_android_os_Binder

First, gBinderOffsets is a struct of type bindernative_offsets_t, filled in by int_register_android_os_Binder.

```cpp
static int int_register_android_os_Binder(JNIEnv* env)
{
    jclass clazz = FindClassOrDie(env, kBinderPathName);

    gBinderOffsets.mClass = MakeGlobalRefOrDie(env, clazz);
    gBinderOffsets.mExecTransact = GetMethodIDOrDie(env, clazz, "execTransact", "(IJJI)Z");
    gBinderOffsets.mGetInterfaceDescriptor = GetMethodIDOrDie(env, clazz, "getInterfaceDescriptor",
        "()Ljava/lang/String;");
    gBinderOffsets.mObject = GetFieldIDOrDie(env, clazz, "mObject", "J");

    return RegisterMethodsOrDie(
        env, kBinderPathName,
        gBinderMethods, NELEM(gBinderMethods));
}
```

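A first guess is that, since every Java process runs its own VM with its own JNIEnv, gBinderOffsets differs between processes. That is not really what decides the result, though: checkSubclass() simply compares the address passed in with &gBinderOffsets inside the current process, and only JavaBBinder overrides it. What matters is the dynamic type of the object, and, as section 4.2 shows, a binder arriving from another process is never a JavaBBinder here but a BpBinder created from a handle.

So checkSubclass in 3.3.3.1 falls back to IBinder's default and returns false, and we go on to create a BinderProxy.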
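checkSubclass() is a type check by address: the caller passes in the address of a global, and only a class that knows that same global answers yes. A minimal C++ sketch with hypothetical classes (gBinderOffsetsToken stands in for gBinderOffsets) shows that the answer depends on the dynamic type of the object: only JavaBBinder overrides the default, so any other IBinder, such as a BpBinder, gets false.

```cpp
#include <cassert>

// Hypothetical token; in android_util_Binder.cpp the token is &gBinderOffsets.
static int gBinderOffsetsToken;

struct IBinder {
    virtual ~IBinder() = default;
    // Default: "I am not the subclass you are asking about."
    virtual bool checkSubclass(const void* /*subclassID*/) const { return false; }
};

struct BBinder : IBinder {};

// Only the JNI wrapper recognizes the token it was built against.
struct JavaBBinder : BBinder {
    bool checkSubclass(const void* subclassID) const override {
        return subclassID == &gBinderOffsetsToken;
    }
};

struct BpBinder : IBinder {};  // keeps the default: always false

// The test javaObjectForIBinder performs before creating a BinderProxy.
bool isOwnJavaBBinder(const IBinder& b) {
    return b.checkSubclass(&gBinderOffsetsToken);
}
```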

3.3.4 Creating BinderProxyNativeData

```cpp
struct BinderProxyNativeData {
    // Both fields are accessed under the proxy lock.
    sp<IBinder> mObject;
    sp<DeathRecipientList> mOrgue;
};
```

Oops, a false alarm? This is just a struct that bundles an IBinder together with a DeathRecipientList.

3.3.5 gBinderProxyOffsets.mGetInstance => BinderProxy.getInstance

```java
private static BinderProxy getInstance(long nativeData, long iBinder) {
    BinderProxy result;
    synchronized (sProxyMap) {
        try {
            // Cache keyed by the native IBinder address: one BinderProxy per remote object.
            result = sProxyMap.get(iBinder);
            if (result != null) {
                return result;
            }
            result = new BinderProxy(nativeData);
        } catch (Throwable e) {
            NativeAllocationRegistry.applyFreeFunction(NoImagePreloadHolder.sNativeFinalizer,
                    nativeData);
            throw e;
        }
        NoImagePreloadHolder.sRegistry.registerNativeAllocation(result, nativeData);
        sProxyMap.set(iBinder, result);
    }
    return result;
}

private BinderProxy(long nativeData) {
    mNativeData = nativeData;
}
```

JNI calls into this Java method, which finally produces the BinderProxy object (or returns the cached one from sProxyMap).
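sProxyMap is what keeps the same native IBinder represented by a single BinderProxy within a process. A sketch of that idea in C++, with hypothetical names: proxies are cached by the native IBinder address, and weak references let an unused proxy be collected so that the next lookup creates a fresh one. The real ProxyMap is a custom hash table of WeakReference<BinderProxy>, and javaObjectForIBinder frees the extra nativeData when an existing proxy is returned; both details are left out here.

```cpp
#include <cassert>
#include <cstdint>
#include <memory>
#include <mutex>
#include <unordered_map>

// Hypothetical Java-side proxy object; only records its native-data pointer.
struct BinderProxyObj {
    explicit BinderProxyObj(int64_t nativeData) : mNativeData(nativeData) {}
    int64_t mNativeData;
};

// One proxy per native IBinder address, held weakly.
class ProxyCache {
public:
    std::shared_ptr<BinderProxyObj> getInstance(int64_t nativeData, int64_t iBinder) {
        std::lock_guard<std::mutex> lock(mLock);
        auto it = mMap.find(iBinder);
        if (it != mMap.end()) {
            if (auto existing = it->second.lock()) return existing;  // reuse live proxy
        }
        auto created = std::make_shared<BinderProxyObj>(nativeData);
        mMap[iBinder] = created;
        return created;
    }

private:
    std::mutex mLock;
    std::unordered_map<int64_t, std::weak_ptr<BinderProxyObj>> mMap;
};
```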

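4. Summary

We have now worked out where BinderProxy comes from. To summarize:

The Server App implements the Service's onBind method and returns an IBinder object, an instance of the Stub class of some AIDL interface; call it StubIBinder.

The Server App calls publishService, and through a chain of calls StubIBinder is turned into a JavaBBinder in the native layer and flattened into the Parcel's memory.

The Binder driver copies the Parcel and hands it to the SystemServer process (we'll analyze this process later).

SystemServer receives the Parcel data, turns the flattened binder object back into a native IBinder, and wraps that IBinder in a Java-layer BinderProxy object.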

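Likewise, the IBinder that the Client App receives through bindService is also a BinderProxy, with one small difference:

When SystemServer sends the Server App's registered BinderProxy to the Client App through IServiceConnection, the object passed to Parcel.writeStrongBinder is that BinderProxy, so ibinderForJavaObject takes its second branch and returns the native IBinder held in the BinderProxyNativeData: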

```cpp
sp<IBinder> ibinderForJavaObject(JNIEnv* env, jobject obj)
{
    if (obj == NULL) return NULL;

    // Instance of Binder?
    if (env->IsInstanceOf(obj, gBinderOffsets.mClass)) {
        JavaBBinderHolder* jbh = (JavaBBinderHolder*)
            env->GetLongField(obj, gBinderOffsets.mObject);
        return jbh->get(env, obj);
    }

    // Instance of BinderProxy? This is the branch taken in SystemServer.
    if (env->IsInstanceOf(obj, gBinderProxyOffsets.mClass)) {
        return getBPNativeData(env, obj)->mObject;
    }

    ALOGW("ibinderForJavaObject: %p is not a Binder object", obj);
    return NULL;
}
```

So in the following flattenBinder step, the type turns out to be BINDER_TYPE_HANDLE:

```cpp
status_t Parcel::flattenBinder(const sp<IBinder>& binder)
{
    flat_binder_object obj;
    // ... (flags setup and the binder == nullptr case omitted)

    BBinder *local = binder->localBinder();
    if (!local) {
        BpBinder *proxy = binder->remoteBinder();
        if (proxy == nullptr) {
            ALOGE("null proxy");
        }
        const int32_t handle = proxy ? proxy->handle() : 0;
        obj.hdr.type = BINDER_TYPE_HANDLE;
        obj.binder = 0;
        obj.handle = handle;
        obj.cookie = 0;
    } else {
        if (local->isRequestingSid()) {
            obj.flags |= FLAT_BINDER_FLAG_TXN_SECURITY_CTX;
        }
        obj.hdr.type = BINDER_TYPE_BINDER;
        obj.binder = reinterpret_cast<uintptr_t>(local->getWeakRefs());
        obj.cookie = reinterpret_cast<uintptr_t>(local);
    }

    return finishFlattenBinder(binder, obj);
}
```

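4.1 A new question

Well, here comes a new question: which localBinder() does binder->localBinder() actually call? Both IBinder and BBinder implement it.

If the object had simply been copied over, the call should resolve to BBinder::localBinder() as before, and the binder would still be local!

If instead the copied object calls IBinder::localBinder() in the new process, then remoteBinder() would also be IBinder's version and return null as well!

That doesn't make sense either: if remoteBinder() returned null, the packed data would carry handle 0 and could no longer identify the Server App's IDemoInterface.

This puzzled me for a while. What this IBinder really is lies at the heart of understanding Binder communication.

Plenty of articles online say that once a binder crosses into another process, the BBinder becomes a BpBinder.

Yet going through the user-space code, we found no such conversion: nowhere in the whole Parcel flatten/unflatten path is a BINDER_TYPE_BINDER object rewritten into a handle, or an IBinder cast into a BpBinder.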

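4.2 The binder driver

The conversion actually happens in the driver: android/kernel/msm-4.19/drivers/android/binder.c

```c
static void binder_transaction(struct binder_proc *proc,
                               struct binder_thread *thread,
                               struct binder_transaction_data *tr, int reply,
                               binder_size_t extra_buffers_size)
{
    /* ... */
    for (buffer_offset = off_start_offset; buffer_offset < off_end_offset;
         buffer_offset += sizeof(binder_size_t)) {
        /* ... locate the object header `hdr` at buffer_offset ... */
        switch (hdr->type) {
        case BINDER_TYPE_BINDER:
        case BINDER_TYPE_WEAK_BINDER: {
            struct flat_binder_object *fp;

            fp = to_flat_binder_object(hdr);
            ret = binder_translate_binder(fp, t, thread);
            /* ... error handling ... */
        } break;
        /* ... BINDER_TYPE_HANDLE, FD, PTR and other cases ... */
        }
    }
    /* ... */
}

static int binder_translate_binder(struct flat_binder_object *fp,
                                   struct binder_transaction *t,
                                   struct binder_thread *thread)
{
    /* ... look up or create the binder_node for fp->binder in the sender,
     *     then take a reference to it in the target process (rdata) ... */

    if (fp->hdr.type == BINDER_TYPE_BINDER)
        fp->hdr.type = BINDER_TYPE_HANDLE;
    else
        fp->hdr.type = BINDER_TYPE_WEAK_HANDLE;
    fp->binder = 0;
    fp->handle = rdata.desc;  /* handle valid in the target process */
    fp->cookie = 0;
    /* ... */
}
```

The driver rewrites the data while transferring it! No wonder the user-space code shows no trace of the conversion; this is really easy to be misled by.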
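The effect of this translation can be modelled in a few lines. The toy driver below is a hypothetical simplification, not the kernel's data structures: a node is identified by its owner process and binder pointer, each process gets its own handle table, and handles are handed out starting from 1 because 0 is reserved for the context manager (servicemanager). It also models the reverse case handled by binder_translate_handle(): when a handle travels back to the process that owns the node, that process receives BINDER_TYPE_BINDER with its original pointers again. The process IDs in the usage are made up.

```cpp
#include <cassert>
#include <cstdint>
#include <map>
#include <tuple>
#include <utility>

enum class Type { BINDER, HANDLE };

// Simplified flat_binder_object.
struct Flat {
    Type type;
    uint64_t binder;  // sender-local pointer (BINDER only)
    uint32_t handle;  // receiver-local handle (HANDLE only)
    uint64_t cookie;  // sender-local BBinder address (BINDER only)
};

// Hypothetical toy model of the driver's node/ref bookkeeping.
class ToyDriver {
public:
    // Rewrites one object as it travels from process `from` to process `to`.
    Flat translate(int from, int to, const Flat& fp) {
        if (fp.type == Type::BINDER) {
            // Like binder_translate_binder(): the receiver gets a handle instead.
            Node node{from, fp.binder, fp.cookie};
            return Flat{Type::HANDLE, 0, refFor(to, node), 0};
        }
        // Like binder_translate_handle(): find the node behind the sender's handle.
        Node node = refs_.at({from, fp.handle});
        if (node.owner == to) {
            // Back in the owning process: hand out the original pointers.
            return Flat{Type::BINDER, node.binder, 0, node.cookie};
        }
        return Flat{Type::HANDLE, 0, refFor(to, node), 0};
    }

private:
    struct Node {
        int owner = 0;
        uint64_t binder = 0;
        uint64_t cookie = 0;
    };

    // One reference (and handle) per (process, node); handles start at 1
    // because 0 is reserved for the context manager.
    uint32_t refFor(int pid, const Node& node) {
        auto key = std::make_tuple(pid, node.owner, node.binder);
        auto it = handleOf_.find(key);
        if (it != handleOf_.end()) return it->second;
        uint32_t& next = nextHandle_[pid];
        if (next == 0) next = 1;
        const uint32_t handle = next++;
        handleOf_[key] = handle;
        refs_[{pid, handle}] = node;
        return handle;
    }

    std::map<std::tuple<int, int, uint64_t>, uint32_t> handleOf_;
    std::map<std::pair<int, uint32_t>, Node> refs_;
    std::map<int, uint32_t> nextHandle_;
};
```

Usage: a Stub published by the Server App reaches SystemServer as a handle, SystemServer forwards that handle to the Client App (which gets its own handle), and if the Client App ever sends it back to the Server App, the server sees its own object again.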

4.3 SystemServer => Parcel#unflattenBinder

So when Parcel#unflattenBinder in 3.3.2.2 unpacks the data, the IBinder object takes the other path, the BINDER_TYPE_HANDLE branch:

```cpp
status_t Parcel::unflattenBinder(sp<IBinder>* out) const
{
    const flat_binder_object* flat = readObject(false);

    if (flat) {
        switch (flat->hdr.type) {
            case BINDER_TYPE_BINDER: {
                // cookie holds the address of a BBinder in *this* process.
                sp<IBinder> binder = reinterpret_cast<IBinder*>(flat->cookie);
                return finishUnflattenBinder(binder, out);
            }
            case BINDER_TYPE_HANDLE: {
                // handle refers to a remote object: get (or create) a BpBinder for it.
                sp<IBinder> binder =
                    ProcessState::self()->getStrongProxyForHandle(flat->handle);
                return finishUnflattenBinder(binder, out);
            }
        }
    }
    return BAD_TYPE;
}
```

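One side question here: look at the layout of the flat_binder_object struct:

```c
#ifdef BINDER_IPC_32BIT
typedef __u32 binder_size_t;
typedef __u32 binder_uintptr_t;
#else
typedef __u64 binder_size_t;
typedef __u64 binder_uintptr_t;
#endif

struct flat_binder_object {
    struct binder_object_header hdr;
    __u32 flags;

    /* 8 bytes of data. */
    union {
        binder_uintptr_t binder; /* local object */
        __u32 handle;            /* remote object */
    };

    /* extra data associated with local object */
    binder_uintptr_t cookie;
};
```

handle is a __u32, so it occupies 4 bytes; it shares a union with binder, which is 8 bytes wide on 64-bit builds.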
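A quick layout check, assuming a 64-bit build where binder_uintptr_t is 64 bits. The struct is re-declared here with <cstdint> types rather than taken from the kernel's uapi header: binder and handle share the same 8-byte union, so handle uses only 4 of those bytes, and the whole object is 24 bytes.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Re-declared with <cstdint> types for a 64-bit build (binder_uintptr_t = 64 bits).
struct binder_object_header {
    uint32_t type;
};

struct flat_binder_object {
    binder_object_header hdr;
    uint32_t flags;
    union {
        uint64_t binder;  // local object: pointer valid in the sender
        uint32_t handle;  // remote object: handle valid in the receiver
    };
    uint64_t cookie;
};

static_assert(offsetof(flat_binder_object, flags) == 4, "flags follows the 4-byte header");
static_assert(offsetof(flat_binder_object, cookie) == 16, "the union occupies bytes 8..15");
static_assert(sizeof(flat_binder_object) == 24, "4 + 4 + 8 + 8 bytes");
```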

4.3.1 ProcessState#getStrongProxyForHandle(int32_t handle)

```cpp
sp<IBinder> ProcessState::getStrongProxyForHandle(int32_t handle)
{
    sp<IBinder> result;

    AutoMutex _l(mLock);

    handle_entry* e = lookupHandleLocked(handle);

    if (e != nullptr) {
        IBinder* b = e->binder;
        // No cached proxy yet, or the cached one is being destroyed: create a new BpBinder.
        if (b == nullptr || !e->refs->attemptIncWeak(this)) {
            if (handle == 0) {
                // Handle 0 is the context manager (servicemanager); ping it first.
                Parcel data;
                status_t status = IPCThreadState::self()->transact(
                        0, IBinder::PING_TRANSACTION, data, nullptr, 0);
                if (status == DEAD_OBJECT)
                    return nullptr;
            }

            b = BpBinder::create(handle);
            e->binder = b;
            if (b) e->refs = b->getWeakRefs();
            result = b;
        } else {
            // Reuse the cached proxy.
            result.force_set(b);
            e->refs->decWeak(this);
        }
    }

    return result;
}
```

4.3.2 ProcessState#lookupHandleLocked

```cpp
ProcessState::handle_entry* ProcessState::lookupHandleLocked(int32_t handle)
{
    const size_t N = mHandleToObject.size();
    if (N <= (size_t)handle) {
        // Grow the table with empty entries up to and including `handle`.
        handle_entry e;
        e.binder = nullptr;
        e.refs = nullptr;
        status_t err = mHandleToObject.insertAt(e, N, handle + 1 - N);
        if (err < NO_ERROR) return nullptr;
    }
    return &mHandleToObject.editItemAt(handle);
}
```

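The handle passed in here ultimately comes from what the Server App wrote when packing the data, obj.binder = reinterpret_cast<uintptr_t>(local->getWeakRefs()), after the driver has processed it: the driver finds (or creates) the binder_node for that value in the Server App, gives the receiving process a reference to that node, and writes the reference's descriptor into handle. The handle can therefore be seen as a token, valid only in the receiving process, that maps a BpBinder to its BBinder. We'll look at the details later.

Different BBinders generally end up with different handles (why that is involves the binder driver and Android's smart pointers), so mHandleToObject serves as a per-process cache from handle to IBinder: receiving the same remote object again reuses the existing BpBinder instead of repeatedly creating and destroying one.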
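A sketch of that cache, assuming std::weak_ptr in place of the raw IBinder* plus attemptIncWeak() that the real handle_entry uses: the table grows on demand like lookupHandleLocked(), a live proxy for a handle is reused, and a new BpBinder is created only when there is none (or the old one has died). The handle-0 ping of servicemanager is omitted, and all names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <memory>
#include <mutex>
#include <vector>

struct BpBinder {
    explicit BpBinder(int32_t h) : handle(h) {}
    int32_t handle;
};

// Hypothetical handle -> proxy table, one per process.
class HandleTable {
public:
    std::shared_ptr<BpBinder> getStrongProxyForHandle(int32_t handle) {
        std::lock_guard<std::mutex> lock(mLock);
        if (handle < 0) return nullptr;
        const auto idx = static_cast<size_t>(handle);
        if (mHandleToObject.size() <= idx) {
            mHandleToObject.resize(idx + 1);  // like lookupHandleLocked()
        }
        std::weak_ptr<BpBinder>& entry = mHandleToObject[idx];
        if (auto existing = entry.lock()) return existing;  // cached and alive
        auto created = std::make_shared<BpBinder>(handle);
        entry = created;
        ++createdCount;
        return created;
    }
    size_t size() const { return mHandleToObject.size(); }
    int createdCount = 0;

private:
    std::mutex mLock;
    std::vector<std::weak_ptr<BpBinder>> mHandleToObject;
};
```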

4.3.3 Creating the BpBinder

```cpp
BpBinder* BpBinder::create(int32_t handle) {
    int32_t trackedUid = -1;
    if (sCountByUidEnabled) {
        trackedUid = IPCThreadState::self()->getCallingUid();
        AutoMutex _l(sTrackingLock);
        uint32_t trackedValue = sTrackingMap[trackedUid];
        if (CC_UNLIKELY(trackedValue & LIMIT_REACHED_MASK)) {
            if (sBinderProxyThrottleCreate) {
                return nullptr;
            }
        } else {
            if ((trackedValue & COUNTING_VALUE_MASK) >= sBinderProxyCountHighWatermark) {
                ALOGE("Too many binder proxy objects sent to uid %d from uid %d (%d proxies held)",
                      getuid(), trackedUid, trackedValue);
                sTrackingMap[trackedUid] |= LIMIT_REACHED_MASK;
                if (sLimitCallback) sLimitCallback(trackedUid);
                if (sBinderProxyThrottleCreate) {
                    ALOGI("Throttling binder proxy creates from uid %d in uid %d until binder proxy"
                          " count drops below %d",
                          trackedUid, getuid(), sBinderProxyCountLowWatermark);
                    return nullptr;
                }
            }
        }
        sTrackingMap[trackedUid]++;
    }
    return new BpBinder(handle, trackedUid);
}

BpBinder::BpBinder(int32_t handle, int32_t trackedUid)
    : mHandle(handle)
    , mStability(0)
    , mAlive(1)
    , mObitsSent(0)
    , mObituaries(nullptr)
    , mTrackedUid(trackedUid)
{
    ALOGV("Creating BpBinder %p handle %d\n", this, mHandle);

    extendObjectLifetime(OBJECT_LIFETIME_WEAK);
    IPCThreadState::self()->incWeakHandle(handle, this);
}
```
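Besides creating the object, BpBinder::create counts proxies per calling uid. The sketch below isolates that counting logic under hypothetical names: once a uid reaches the high watermark, the limit bit is set and the callback fires once; if throttling is enabled, creation then fails (the real function returns nullptr). The mask values mirror the idea of LIMIT_REACHED_MASK and COUNTING_VALUE_MASK but should be treated as assumptions, and the low-watermark reset that happens as proxies are destroyed is not modelled.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <map>

// Assumed layout: top bit = "limit reached", remaining bits = proxy count.
constexpr uint32_t LIMIT_REACHED_MASK = 0x80000000u;
constexpr uint32_t COUNTING_VALUE_MASK = 0x7FFFFFFFu;

class ProxyCounter {
public:
    ProxyCounter(uint32_t highWatermark, bool throttleCreate)
        : mHigh(highWatermark), mThrottle(throttleCreate) {}

    std::function<void(int32_t)> limitCallback;  // like sLimitCallback

    // Returns false where BpBinder::create would return nullptr.
    bool tryCreate(int32_t uid) {
        uint32_t& value = mTracking[uid];
        if (value & LIMIT_REACHED_MASK) {
            if (mThrottle) return false;
        } else if ((value & COUNTING_VALUE_MASK) >= mHigh) {
            value |= LIMIT_REACHED_MASK;  // report only once
            if (limitCallback) limitCallback(uid);
            if (mThrottle) return false;
        }
        ++value;
        return true;
    }

    uint32_t count(int32_t uid) { return mTracking[uid] & COUNTING_VALUE_MASK; }

private:
    uint32_t mHigh;
    bool mThrottle;
    std::map<int32_t, uint32_t> mTracking;
};
```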

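Finally, here is the IBinder class diagram:

Now that we understand how IBinder objects are converted, how BinderProxy is created, and how BBinder maps to BpBinder, the next step is the actual communication between the Client App and the Server App.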
